About 70–85% of AI projects never make it to production or fail to deliver the promised results, according to research from McKinsey, Gartner, and Harvard Business Review. That’s a lot of wasted time, budget, and energy for companies that went in excited but unprepared.

For mid-level tech and ops leaders, AI implementation in business can feel like running a marathon at sprint speed. You’re asked to show measurable impact in months while working with small teams and sky-high expectations. Without a clear roadmap, it’s easy to hit the same walls that stop so many AI efforts.

We’ve seen leaders succeed when they take a structured, business-first approach. At Aloa, we’ve helped businesses like yours with custom AI development services that focus on what matters most: aligning projects to real business goals, validating ideas quickly, and building solutions that fit how your company actually operates.

In this guide, we’ll break down six common but costly mistakes that can trip up AI initiatives. You’ll learn how to avoid them, see industry-specific examples, and get clear next steps to roll out AI that earns trust across your company.

How AI Implementation in Business Works

AI implementation in business means weaving AI tools into your company’s processes and decisions to boost efficiency, accuracy, and performance. It uses software that can learn, plan, and solve problems much like people do.

That sounds nice in theory, but what does it look like day-to-day? Think about a hospital using predictive analytics to flag high-risk patients before they need emergency care. Or a retailer using an AI chatbot to handle late-night customer questions while staff sleep. These are practical ways businesses fold AI into real operations.

Done right, AI becomes part of your workflow. Whether you use it to make sense of supply chain data or to automate invoices, the goal isn’t to include AI for the sake of it. Rather, it’s about freeing up your energy to focus on higher-value work.

Mistake #1: Rushing Into AI Without Strategic Planning

One of the fastest ways to burn through money and patience is jumping into AI without a plan. It’s tempting to grab the latest tool or vendor because competitors are talking about it. But when AI projects aren’t tied to clear business objectives, they fizzle out or fail to deliver anything useful.

Successful AI implementation in business starts with alignment. What problem are you solving? Is it cutting manual data entry, improving patient care, or reducing fraud? Each goal demands different data, models, and workflows. Without that clarity, teams often chase generic “AI solutions” that never fit.

Consider a mid-sized retailer that buys an off-the-shelf recommendation engine expecting an instant sales boost. Without studying customer behavior or integrating online and in-store data, the system makes clumsy suggestions that frustrate shoppers instead of increasing revenue. Failures like this happen when businesses leap to technology before mapping their actual needs and constraints.

Strategic Planning by Company Size

How you build an AI strategy depends a lot on your company’s size and structure. A startup doesn’t need the same playbook as a 500-person enterprise. Here’s how different types of businesses can approach planning in a way that makes sense for their resources and goals:

- Smaller Teams: Start with one problem that directly impacts revenue or efficiency. For example, a small logistics firm could automate invoice matching with an AI workflow. This frees staff from repetitive tasks, saving hours each week without requiring a significant budget.

- Mid-Sized Companies: Form a cross-department AI task force. For example, a mid-sized retailer might create a task force that picks vendors, reviews pilots, and creates data-sharing guidelines. This keeps marketing, sales, and operations aligned so AI efforts work with existing processes.

- Larger Enterprises: Build a core AI center of excellence. For example, a regional bank might form a small internal AI team that develops fraud-detection models, provides training for different departments, and ensures regulatory compliance. Centralizing this avoids fragmentation and speeds up adoption across business units.

How to Run an AI Readiness Assessment

Even with a plan, you need to know where your company stands before building anything. An AI readiness assessment helps you see what’s working, what’s missing, and where you need support. It’s a quick way to avoid blind spots and set your project up for success:

- Review business priorities: Identify 2–3 goals where AI could drive measurable results. A hospital might pick reducing ER wait times; a manufacturer might target predictive maintenance to avoid downtime.

- Audit existing data and tools: Check if your data is clean, complete, and accessible. Does your sales data live in one CRM or five spreadsheets? Are there gaps that need fixing before training any model?

- Check team skills and gaps: Map out who understands data, analytics, or machine learning, and where outside support is needed. You might bring in an AI consultant or partner like Aloa’s AI consulting services for a proof-of-concept instead of making full-time hires right away.

- Set measurable success criteria: Define outcomes upfront. Instead of “improve efficiency,” say “reduce claims processing time by 25% in six months.” Specific targets keep projects focused and make it easier to prove ROI later.

Taking these steps slows things down at the start but saves months of rework later. When AI projects connect directly to business goals and are grounded in reality, it’s easier to measure ROI, secure buy-in, and avoid becoming part of that 70–85% failure statistic.

Mistake #2: Choosing the Wrong AI Technology or Partner

Another common pitfall is picking AI tools or vendors based on hype instead of fit. It’s easy to get impressed by slick demos or promises of “revolutionary” results. But if the technology doesn’t align with your business needs or the vendor can’t follow through, you’ll waste time and budget without solving real problems.

The root issue isn’t always bad intent from your vendor. Sometimes teams don’t ask the right questions up front or fail to test solutions before committing. A manufacturer that signs with a vendor lacking industry knowledge may face wasted spend and months of rework when the AI doesn’t account for its unique production workflows. That can be prevented with better due diligence.

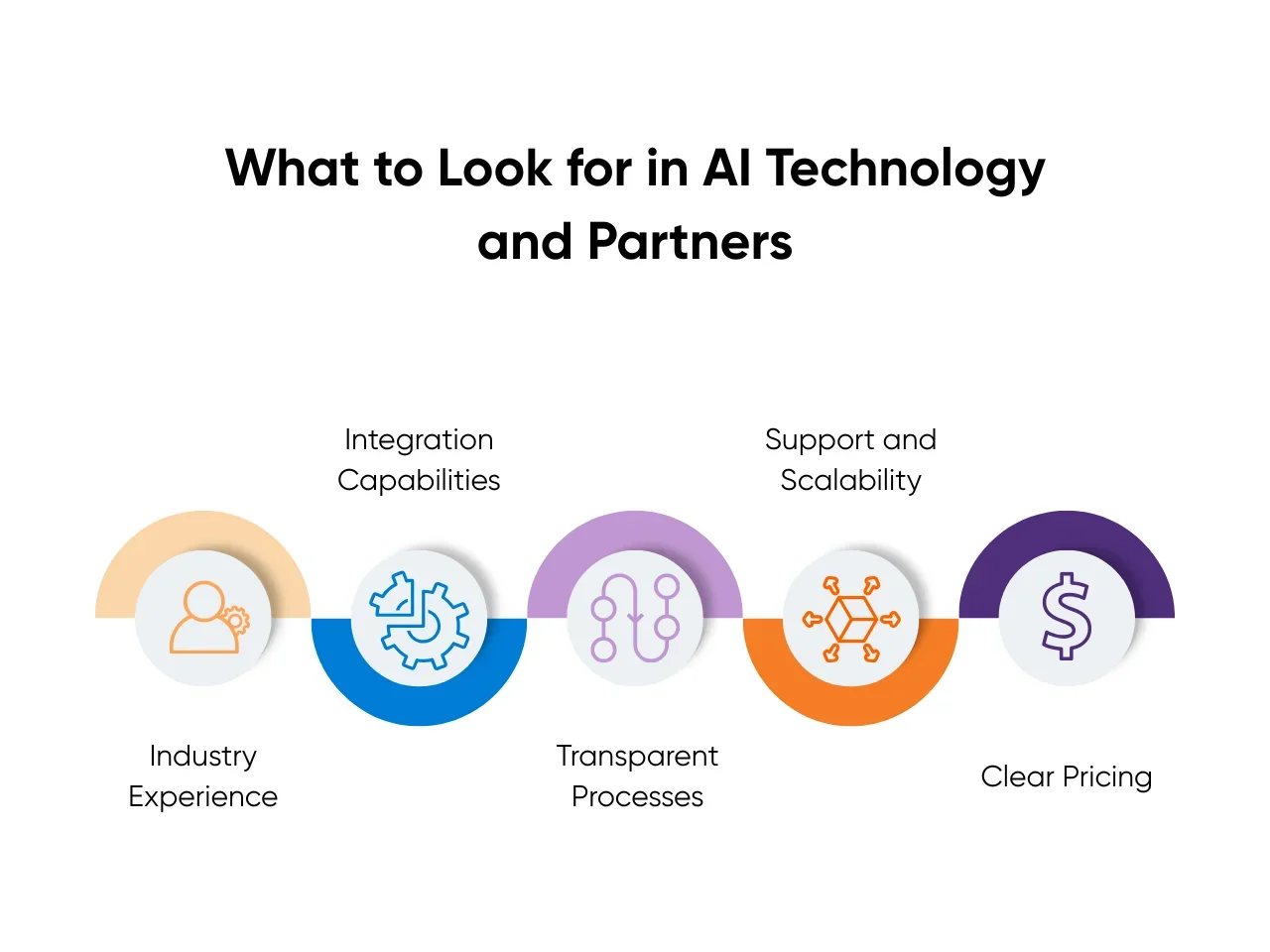

What to Look for in AI Technology and Partners

Not every tool or vendor will be right for your company. The best choices fit your goals, your data, and your team’s capabilities. Here’s how to vet them effectively:

- Industry Experience: Choose vendors with a track record in your field. A finance company selecting an AI fraud detection system should look for providers with proven results in similar regulatory environments. Ask for case studies relevant to your sector.

- Integration Capabilities: Make sure the tech can work with your existing systems. A retail chain investing in an AI recommendation engine needs APIs and integration support to connect online, POS, and inventory platforms.

- Transparent Processes: Ask how models are built and maintained. Can they explain how their AI algorithms make predictions? Vendors unwilling or unable to share this raise red flags.

- Support and Scalability: Look beyond the pilot. Does the vendor offer ongoing support and roadmap planning? Will their system scale as your needs grow? A tool that works for one department but stalls company-wide won’t deliver long-term value.

- Clear Pricing: Hidden costs can sink ROI. Push for transparent pricing, including data preparation, licensing, and support fees.

Taking time to evaluate these factors helps you avoid mismatches and sets realistic expectations about what the technology can deliver.

Proof-of-Concepts Matter

Even the most promising tool should be tested before a full rollout. At Aloa, we believe a proof-of-concept (POC) is one of the smartest ways to de-risk any AI project. It lets you see how the AI works with your data and workflows on a small scale, which reduces risk and builds internal buy-in:

- Define success criteria: Decide what outcomes you need from the pilot. For example, a hospital testing an AI triage tool might target reducing patient intake time by 15%.

- Use representative data: Test with real datasets, not vendor-provided samples. This highlights data quality or integration issues early.

- Involve end users: Get feedback from the people who’ll use the system daily. A POC for a customer service chatbot should include frontline agents to flag gaps and suggest improvements.

- Evaluate vendor collaboration: The POC phase shows how responsive and adaptable the vendor is. Do they adjust when issues come up or get defensive? That’s a clue to how they’ll behave post-sale.

- Measure and document results: Compare outcomes to your criteria before scaling. If targets aren’t met, adjust or walk away without being locked into a costly contract.

POCs may feel like extra work when you strongly believe in what you’re building, but they save far more time and money than jumping straight into full deployment. Companies that take this step, something we’ve championed in many projects, tend to see higher adoption rates and fewer costly surprises.

Taking a structured approach to vendor selection and validation helps ensure you choose partners and tools that deliver real business value. Instead of flashy demos, you’ll base decisions on fit, proof, and long-term potential, which will put your AI initiatives on steadier ground.

Mistake #3: Underestimating Data Requirements and Quality Issues

AI thrives on quality data. Yet many businesses jump into projects without a clear view of what data they have, where it lives, or how clean it is. When your models are trained on incomplete or inconsistent data, the results will always disappoint, no matter how advanced the algorithms.

This mistake shows up in many forms. A retailer might try predictive analytics with years of sales data, only to realize it’s riddled with gaps and duplicate entries. Or a healthcare provider might want an AI triage tool but find its patient records lack standardized formats across different departments.

Industry experts emphasize that data groundwork is one of the first and most critical steps in AI success. As IBM notes in its AI implementation guide, assessing what data you have, cleaning it, and ensuring integration across systems are non-negotiable. Without upfront work to prepare and govern data, AI initiatives can stall before they even begin.

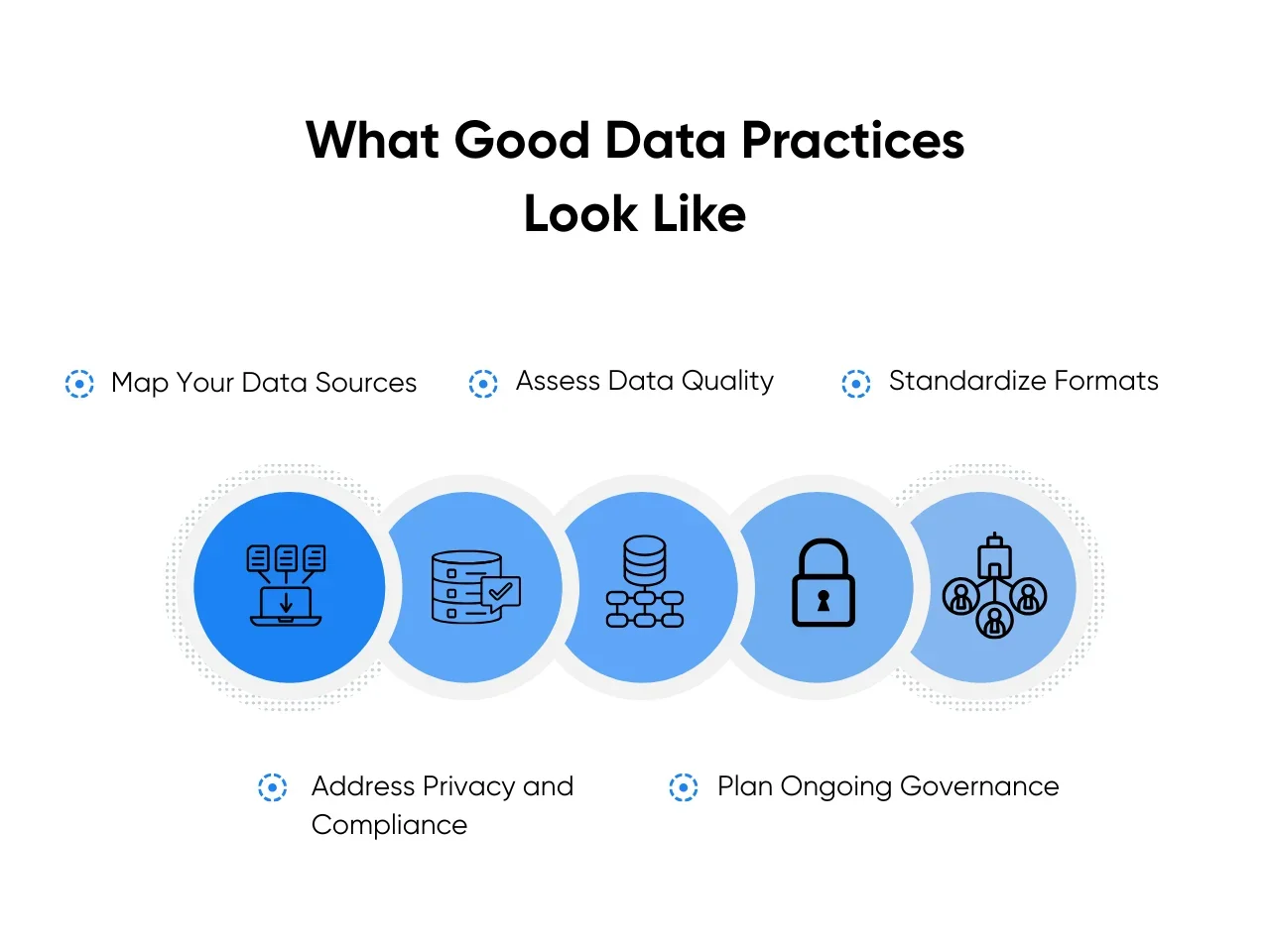

What Good Data Practices Look Like

High-performing AI projects start with clear plans for data sourcing, cleaning, and ongoing governance. Here are some essentials to focus on:

- Map your data sources: Identify where your relevant data lives (CRM, ERP, spreadsheets, external APIs). Understanding your data landscape prevents surprises down the road.

- Assess data quality: Look for gaps, inconsistencies, and errors. Are customer addresses missing? Are timestamps in different formats? Fixing these early saves significant time later.

- Standardize formats: Align naming conventions, units of measure, and data structures. For example, unify date formats or product codes across different departments.

- Address privacy and compliance: Especially critical for industries like healthcare and finance. Ensure any AI initiative respects regulations like HIPAA or GDPR and that sensitive data is handled responsibly.

- Plan ongoing governance: AI isn’t a one-and-done project. Establish who owns the data, how it will be maintained, and how changes will be documented over time.

When these fundamentals are neglected, models either fail outright or produce outputs that users don’t trust. Either outcome erodes adoption and wastes resources.
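
A first pass at assessing quality and standardizing formats can be a short script. The sketch below is a minimal illustration, not a full profiling tool. The sample records, the field names, and the list of date formats are all hypothetical, so swap in whatever your own systems actually export.

```python
from collections import Counter
from datetime import datetime

# Hypothetical sample records; field names are illustrative only.
records = [
    {"customer_id": "C001", "address": "12 Main St", "order_date": "2024-01-15"},
    {"customer_id": "C002", "address": "", "order_date": "01/17/2024"},
    {"customer_id": "C001", "address": "12 Main St", "order_date": "2024-01-15"},
    {"customer_id": "C003", "address": "9 Oak Ave", "order_date": "17 Jan 2024"},
]

# Date formats we assume might appear; extend this list for your own data.
KNOWN_FORMATS = ["%Y-%m-%d", "%m/%d/%Y", "%d %b %Y"]


def detect_format(value):
    """Return the first known format that parses the value, or None."""
    for fmt in KNOWN_FORMATS:
        try:
            datetime.strptime(value, fmt)
            return fmt
        except ValueError:
            continue
    return None


def profile(rows):
    """Count empty fields, exact duplicate rows, and date formats in use."""
    missing, formats = Counter(), Counter()
    seen, duplicates = set(), 0
    for row in rows:
        for field, value in row.items():
            if not value:
                missing[field] += 1
        formats[detect_format(row["order_date"])] += 1
        key = tuple(sorted(row.items()))
        if key in seen:
            duplicates += 1
        seen.add(key)
    return {
        "missing": dict(missing),
        "duplicates": duplicates,
        "date_formats": dict(formats),
    }


def to_iso(value):
    """Standardize a date string to YYYY-MM-DD, or None if unparseable."""
    fmt = detect_format(value)
    return datetime.strptime(value, fmt).strftime("%Y-%m-%d") if fmt else None


report = profile(records)
print(report)
print([to_iso(r["order_date"]) for r in records])
```

One caveat on the design: a value like 01/02/2024 is ambiguous between month-first and day-first conventions, and this sketch simply assumes month-first. Confirm the convention with each source system before converting anything in bulk.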

How to Right-Size Your Data Strategy

Getting your data AI-ready doesn’t mean tackling everything at once. At Aloa, we guide clients to start small and expand as they learn. Here’s how you can do the same:

- Tie data work to business goals: If your goal is to reduce fraud, prioritize transaction and user behavior data first. Don’t waste time cleaning unrelated datasets.

- Run a data readiness check: Audit your critical datasets for completeness, consistency, and accessibility. Tools for data profiling can help you flag issues fast.

- Pilot with a narrow scope: Test your AI model with a limited dataset or in one department. A manufacturer might pilot predictive maintenance on one production line before scaling plant-wide.

- Invest in the right skills: Data engineering and data science aren’t the same. Make sure your team or partner has expertise in preparing data for AI use cases.

- Document as you go: Keep a record of changes, cleaning methods, and assumptions. This not only supports compliance but also helps future projects avoid rework.

Treating data as a first-class citizen sets your AI initiatives up for success. Companies that handle this step well see faster deployments, more accurate models, and higher trust from end users.

Mistake #4: Ignoring Change Management and Employee Resistance

Many tech leaders focus on models and tools, but ignore the human side of AI. This mistake hits enterprises especially hard. Larger companies have more layers, more legacy processes, and more employees who need to understand and trust the changes. Even if the tech works perfectly, your AI initiative won’t succeed without proper change management.

Think about it: a hospital rolling out a scheduling AI might see great technical results in testing. But if nurses and staff feel blindsided, fear job loss, or don’t know how the tool helps them, they’ll resist using it. Or an enterprise retailer might launch a new AI-driven inventory system, only to find store managers quietly revert to spreadsheets because they were never trained or consulted.

Communicate AI Benefits and Address Employee Concerns

For AI adoption to work, you have to win hearts and minds as much as business cases. Here are some ways to make that happen:

- Explain the “why” clearly: Show how AI supports company goals and makes jobs easier. For example, automating repetitive data entry lets employees focus on higher-value work like customer experience.

- Be transparent about changes: Address fears openly. Will roles change? How will success be measured? Uncertainty fuels resistance; clarity builds trust.

- Tailor messages to different groups: Executives care about ROI, while front-line employees care about how their day-to-day will shift. AI personas can help you understand and segment these different stakeholder groups to adjust communications accordingly.

- Provide hands-on training: Don’t just share slide decks. Give employees time to try the tool and offer feedback before a full rollout.

These efforts prevent misinformation and anxiety from undermining your investment. When people see how AI helps them succeed, adoption accelerates.

Identify and Empower Internal AI Champions

Change sticks when it comes from within, not just top-down directives. At Aloa, we’ve seen the power of internal champions firsthand, and here’s how you can tap into that same energy in your own organization:

- Find respected employees early: Look for people trusted by peers, like team leads, informal influencers, or early adopters who are curious about AI.

- Involve them in pilots: Let champions test the AI in real workflows and provide honest feedback. Their insights make the solution more usable.

- Give them a voice: Encourage champions to share their experiences and wins with colleagues. Peer advocacy builds credibility faster than executive memos.

- Support them with resources: Provide champions with talking points, training, and access to decision-makers. Make it easy for them to help others adopt.

When internal advocates show how AI makes work easier or improves outcomes, employees follow. This approach turns potential resistance into momentum.

Ignoring the human side of AI creates hidden roadblocks. Planning for communication, training, and champions will help smooth adoption and ensure your technical success translates into faster workflows, cost savings, and happier customers.

Mistake #5: Failing to Establish Clear Success Metrics and ROI Measurement

An AI system can look great in a demo and still flop in production. The usual reason is fuzzy goals. Without clear metrics, you can’t prove value, tune models, or decide when to scale. For many smaller businesses, formal metrics are new territory. They’re still worth getting right for your AI initiative.

Here’s a quick example: a regional insurer pilots claims triage with solid model accuracy. Leadership asks for savings proof. The team never captured baseline handle time or rework rates. Funding pauses. A simple baseline and tracking plan could have shown cycle time cuts and reviewer hours saved. That’s the cost of skipping measurement.

Know the Difference: Technical vs Business Metrics

You need both sets of measures, but they serve different jobs. Use this checklist to align them:

- Technical Metrics: Accuracy, precision/recall, latency, uptime monitoring, and drift. For a chatbot, track response time and containment rate.

- Business Metrics: Cost per claim, first contact resolution, cycle time, revenue per session, charge capture, and error rate. These tie to AI operational efficiency.

- Map Tech to Business: Higher triage accuracy should cut manual reviews. Lower latency should reduce average handle time and lift customer satisfaction.

The takeaway: technical wins mean little without a business link. Make the connection explicit before you build.
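
To make the link between the two kinds of metrics concrete, here is a small sketch for a claims triage pilot. It computes precision and recall (technical) alongside the share of claims sent to manual review (business). The labels are made-up illustration data, and the function name is our own, not part of any library.

```python
def precision_recall(predicted, actual):
    """Compute precision and recall for binary labels (True = flagged)."""
    pairs = list(zip(predicted, actual))
    tp = sum(p and a for p, a in pairs)          # flagged, and truly needed review
    fp = sum(p and not a for p, a in pairs)      # flagged, but did not need review
    fn = sum(a and not p for p, a in pairs)      # missed a claim that needed review
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical pilot results: did the model flag the claim, and did it
# actually need a manual review?
predicted = [True, True, False, True, False, False, True, False]
actual = [True, False, False, True, False, True, True, False]

precision, recall = precision_recall(predicted, actual)

# Business view: what share of claims still goes to a human reviewer?
manual_review_rate = sum(predicted) / len(predicted)

print(f"precision={precision:.2f} recall={recall:.2f}")
print(f"manual review rate={manual_review_rate:.0%}")
```

Every false positive here is a review someone did not need to do, and every false negative is a claim that slipped through. Tracking both next to the review rate shows whether a technical gain is actually reducing workload.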

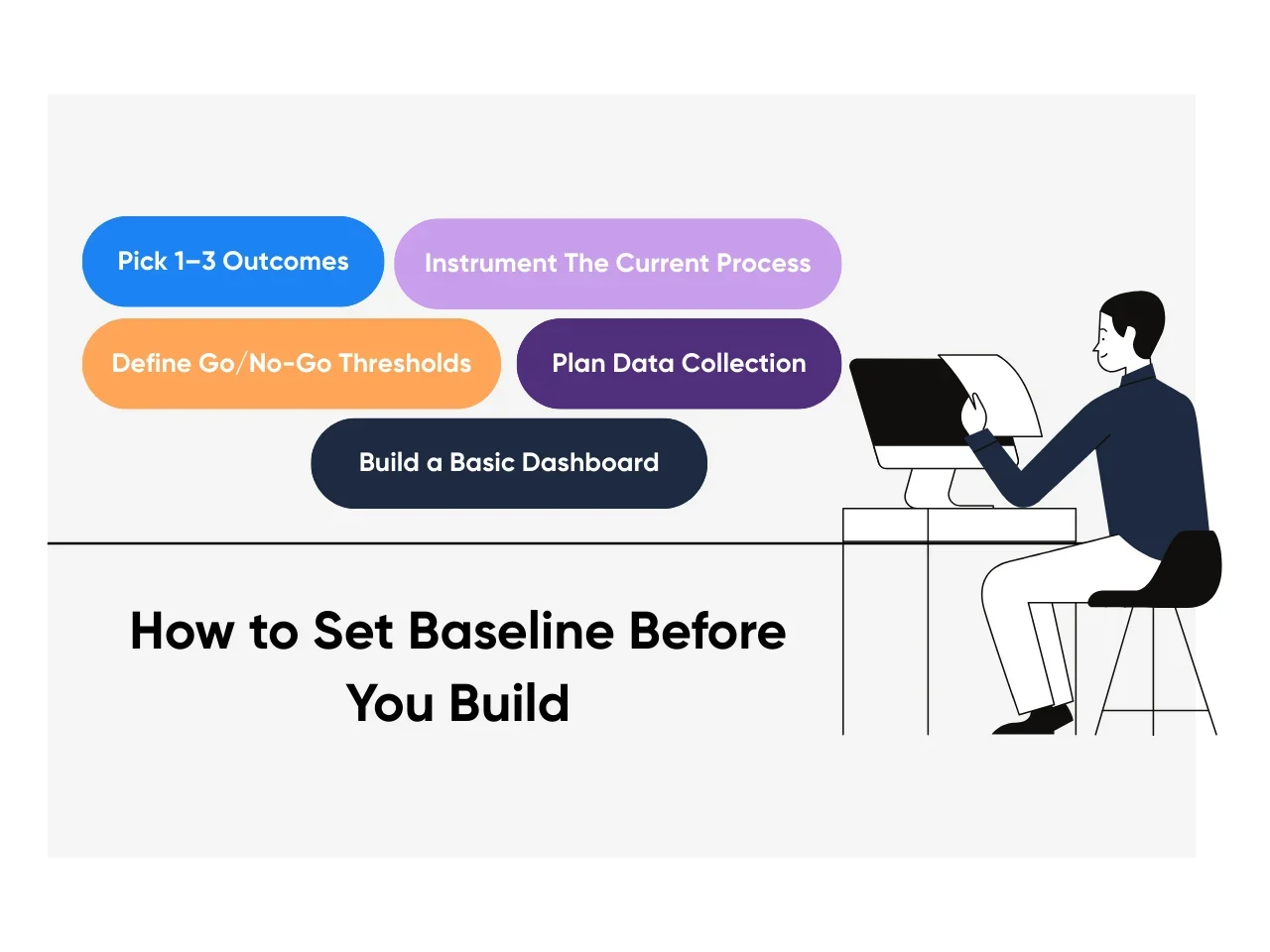

Set Baseline Before You Build

Even if your company lacks formal metrics, start here. Keep it simple and rigorous:

- Pick 1–3 outcomes: Examples include “reduce invoice processing time by 25%” or “cut stockouts by 15%.”

- Instrument the current process: Define start and stop times. Capture handle time, rework, and volume for two to four weeks.

- Define go/no-go thresholds: Set a minimum lift for deployment. For example, “10% cycle time cut within eight weeks.”

- Plan data collection: Decide who logs what, where it lives, and how often it updates.

- Build a basic dashboard: Show baselines, targets, and weekly trends. Google Sheets works to start; scale later.
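
As a rough sketch of how a baseline and a go/no-go threshold fit together, the snippet below compares logged handle times before and during a pilot. The numbers are invented for illustration, and the 10% threshold simply mirrors the example above. Use whatever target your team agreed on.

```python
from statistics import mean

# Hypothetical handle times in minutes; replace with your own logged data.
baseline_minutes = [42, 38, 45, 40, 35]
pilot_minutes = [34, 36, 33, 37, 30]

MIN_REDUCTION = 0.10  # go/no-go threshold: at least a 10% cycle time cut


def percent_reduction(before, after):
    """Relative drop in the mean, e.g. 0.15 means a 15% reduction."""
    base = mean(before)
    return (base - mean(after)) / base


reduction = percent_reduction(baseline_minutes, pilot_minutes)
decision = "go" if reduction >= MIN_REDUCTION else "no-go"
print(f"Cycle time reduction: {reduction:.1%} -> {decision}")
```

With only a handful of samples, a difference like this could be noise. Two to four weeks of baseline data, as suggested above, gives the comparison more weight.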

At Aloa, we often run a short “metrics sprint” before any build. Our AI consulting and digital transformation teams can help you lock targets, baselines, and instrumentation fast.

Set Timelines and Keep Optimizing

Leaders want to know when results will show. Use staged checkpoints and keep tuning:

- Early Signals (Weeks 2–6): Adoption, time saved per task, data quality pass rates, and issue backlog burn-down.

- Mid Signals (Weeks 6–12): Productivity lift, error reduction, and cost avoided in target workflows.

- ROI Window (3–6 Months): Use a simple formula: ROI = (benefits − costs) / costs. Count build, licenses, support, and training as costs.

- Ongoing Monitoring: Review weekly, optimize monthly. Run A/B tests, track drift, and rotate a challenger model when performance dips.

- Operational Guardrails: Define rollback points. If cycle time or error rate regresses, pause and fix.
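
The ROI formula in the list above is easy to work through in a few lines. This is a minimal sketch with hypothetical dollar figures. The cost categories follow the ones named above, and the benefit number is an assumption standing in for whatever savings or revenue lift you measured.

```python
def roi(benefits, costs):
    """Return (benefits - costs) / costs as a fraction, e.g. 0.5 = 50%."""
    total_costs = sum(costs.values())
    if total_costs <= 0:
        raise ValueError("total costs must be positive")
    return (benefits - total_costs) / total_costs


# Hypothetical figures for a six-month window.
costs = {
    "build": 60_000,
    "licenses": 15_000,
    "support": 10_000,
    "training": 5_000,
}
benefits = 135_000  # measured savings plus revenue lift (assumed)

result = roi(benefits, costs)
print(f"ROI: {result:.0%}")
```

Here the costs total 90,000, so the result is (135,000 − 90,000) / 90,000, or 50%. Leaving out a category such as training would overstate the return, which is why the list above spells them out.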

This cadence turns AI implementation challenges into a measurable, repeatable system. It gives leadership proof, your team a plan, and your AI room to improve.

For teams that want a faster path to numbers, we can help standardize dashboards and QA loops with workflow automation and lightweight internal tooling that fit your stack.

Mistake #6: Scaling Too Quickly Without Proper Testing and Validation

After a successful pilot, it’s tempting to go big right away. You see one department getting value, and leadership wants that across the company. But scaling AI too quickly, before proper testing and validation, often backfires.

A pilot that works in one use case doesn’t always translate company-wide. The AI might perform well with one dataset, but struggle when exposed to other systems or higher volumes. Models can even degrade over time if they aren’t retrained or monitored. Gartner reports that only about 48% of AI projects ever make it into production, and scaling across the enterprise is often where things break down. When this happens, confidence in AI takes a hit and momentum stalls.

Why Scaling Is Trickier Than It Looks

On paper, it feels simple: repeat what worked in the pilot. In reality, each department, market, or region has unique processes and data quirks. Let’s take a look at how this plays out across different industries, and why scaling is harder than many project managers expect:

- Healthcare: A diagnostic AI tool that performs well in one hospital may fail in another where patient demographics, EMR systems, and coding practices differ. A model trained on adult patient data could misclassify pediatric cases without re-validation.

- Finance: A fraud detection engine might be tuned for one country’s transaction patterns. When rolled out to new markets, different regulations and payment methods can cause a spike in false positives, frustrating customers and compliance teams.

- Retail: A recommendation engine that boosts e-commerce sales can struggle when expanded to physical stores. Store inventory turnover, point-of-sale integrations, and local shopping habits create new variables the pilot never faced.

- Manufacturing: A predictive maintenance model that worked for one production line may falter on others with different equipment or sensor setups. Without adjustments, this can lead to missed failures or unnecessary maintenance costs.

These examples highlight why scaling isn’t just “copy and paste.” New data, workflows, and user behaviors mean new risks. That’s why deliberate testing is critical before rolling out AI company-wide.

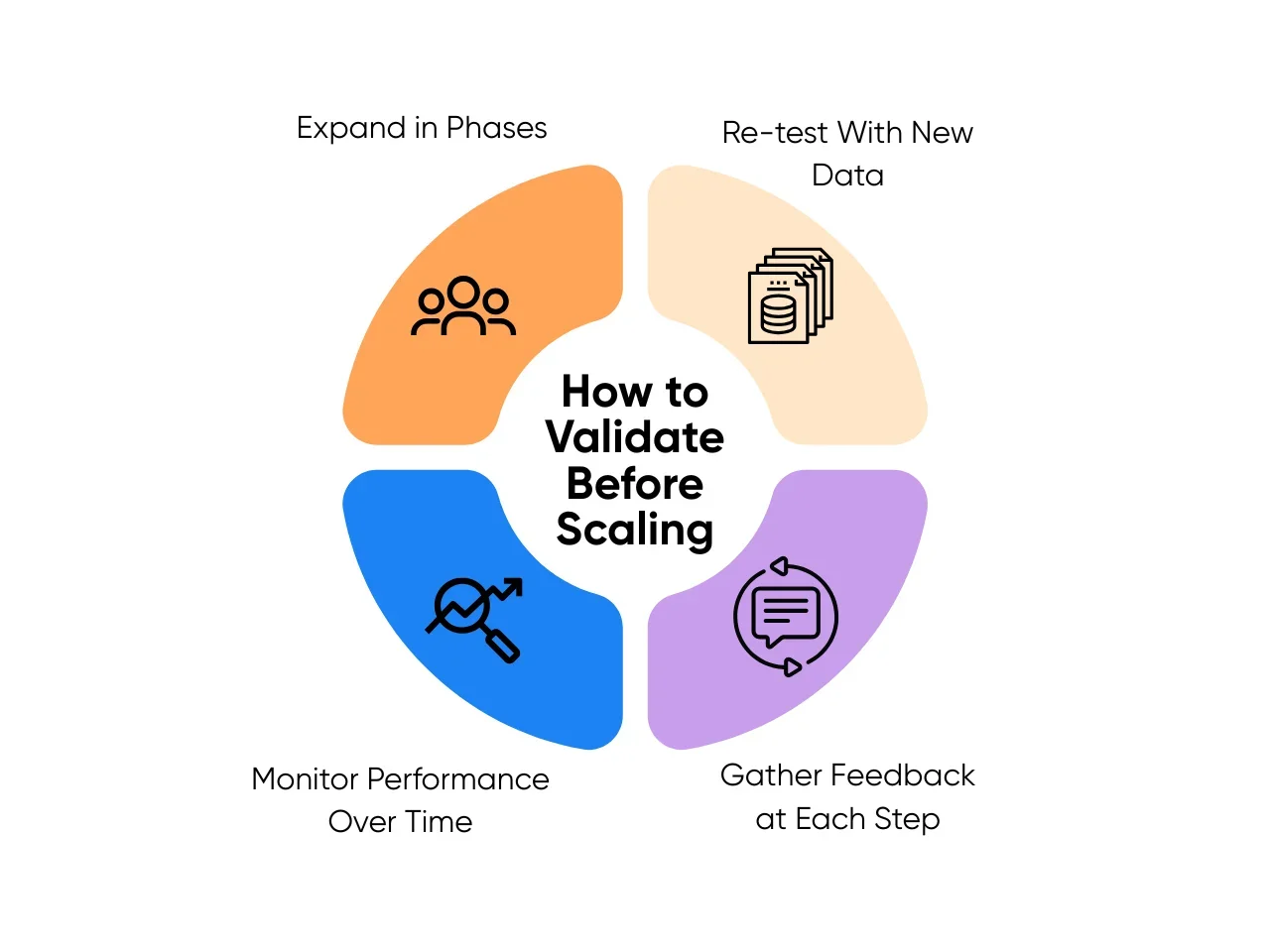

How to Validate Before Scaling

The good news? Scaling AI doesn’t have to be a gamble. Leaders who treat expansion like a structured experiment (rather than a victory lap) tend to see better results. Here’s how you can do the same:

- Expand in phases: Roll out to one new team, region, or product line at a time. A retail chain might test its AI pricing engine in 10 stores before expanding to 200. Watch how results differ and refine the model as you go.

- Re-test with new data: Validate models on fresh datasets from each expansion area. A bank adding AI to detect loan defaults should test against the credit behaviors of each new customer segment, not just the pilot group.

- Gather feedback at each step: Talk to frontline users early and often. For example, when scaling an AI triage system, involve nurses and doctors in each hospital to flag issues in real patient flows. In manufacturing, maintenance crews can surface gaps in predictions that the model missed.

- Monitor performance over time: Scaling isn’t “set it and forget it.” Establish dashboards that track model accuracy, business KPIs, and anomalies. Retrain models on a set schedule or when drift appears. This keeps the AI aligned with changing conditions, like seasonal retail demand or new finance regulations.
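
Re-testing and monitoring can share one simple check: compare accuracy in each new segment, or each new time window, against the pilot and flag anything that falls too far. The sketch below assumes you have labeled outcomes to compare against. The data and the 5-point tolerance are hypothetical, and production drift monitoring usually looks at more signals than accuracy alone.

```python
def accuracy(pairs):
    """Share of (predicted, actual) pairs that match."""
    return sum(p == a for p, a in pairs) / len(pairs)


def needs_review(reference_accuracy, observed_accuracy, tolerance=0.05):
    """Flag a segment or time window whose accuracy falls more than
    `tolerance` below the reference (pilot) accuracy."""
    return (reference_accuracy - observed_accuracy) > tolerance


# Hypothetical labeled results as (predicted, actual) pairs.
pilot = [(1, 1)] * 9 + [(1, 0)]              # 9 of 10 correct
new_region = [(1, 1)] * 7 + [(0, 1)] * 3     # 7 of 10 correct
second_store = [(1, 1)] * 9 + [(0, 1)]       # 9 of 10 correct

pilot_accuracy = accuracy(pilot)
for name, results in [("new_region", new_region), ("second_store", second_store)]:
    observed = accuracy(results)
    status = "review before scaling" if needs_review(pilot_accuracy, observed) else "ok"
    print(f"{name}: accuracy={observed:.0%} -> {status}")
```

The same function works for a scheduled check on recent production data: pass last month’s accuracy as the observed value and treat a flag as a trigger to investigate or retrain.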

We’ve seen how phased rollouts and real-time monitoring make scaling safer and more successful. In our AI consulting projects, we guide leaders to validate in steps, not leaps, because sustainable adoption always beats fast but fragile.

By slowing down to test and validate, you’ll earn trust, cut costly rework, and help your AI deliver higher accuracy, smoother operations, and faster ROI across the business, not just in one lucky pilot.

Key Takeaways

AI implementation in business comes with high stakes. The six mistakes we’ve covered all come back to one thing: due diligence. Teams that take the time to plan, test, and listen deliver AI that sticks. Teams that don’t often burn through budget and goodwill with little to show for it.

As a business leader, you don’t need to be a machine learning expert to set your company up for a win. But you do need to ask the right questions, insist on proof before promises, and keep working to understand what you’re building. That understanding lets you judge whether AI actually addresses what you need, and it makes the difference between a costly AI failure and a successful AI initiative.

If you’re ready to turn AI strategy into results, start small, stay curious, and get the guidance you need from teams like Aloa. We’ve helped companies in healthcare, finance, retail, and manufacturing build AI that earns trust and delivers value. We’d love to hear what you’re working on and share what’s worked for others in your shoes.

Ready to move from ideas to impact? Schedule a call with Aloa and let’s map out your next step.